CES 2026 was crowded with humanoids doing simple household tasks such as folding laundry or stacking up the dishwasher. One thing I was sure of seeing this influx of robots at the world’s biggest tech event, was that such service bots are going to be the next big thing invading our households in the near future.

Staying with that thought, the robotics industry, for now, faces the biggest challenge in teaching robots to operate in the messy real world. The unstructured environment means robots need massive amounts of data to learn. Gathering and structuring that data is the costliest thing in robotics and perhaps the biggest impediment, slowing the entire development process.

Designer: DreamDojo

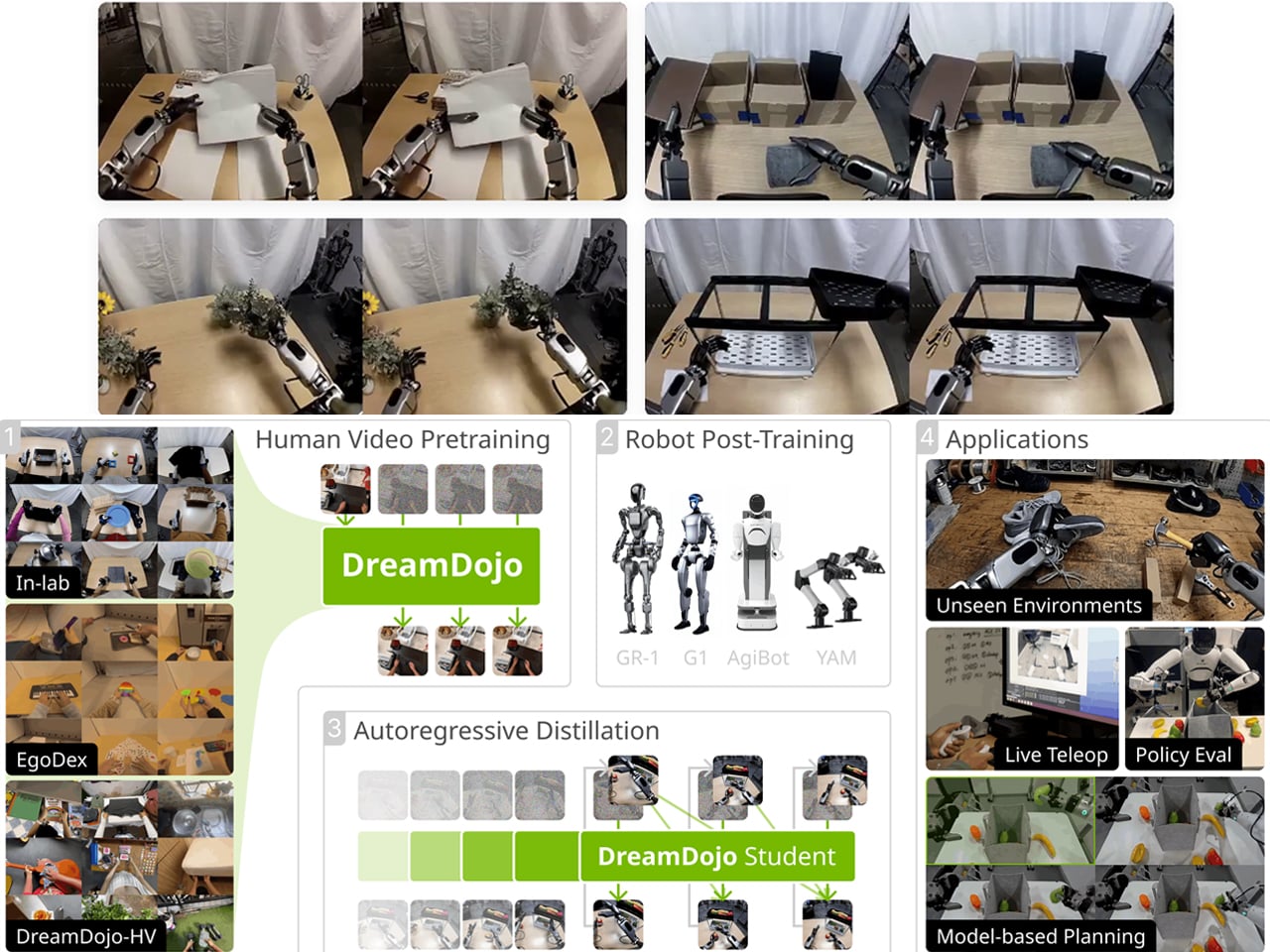

NVIDIA believes it has created a workaround. The company has released DreamDojo, an open-source “world model,” which intends to help robots learn intuitive physics to interact in the physical world by seeing humans do it first. So, instead of relying on painstaking programming or teleoperating robots, Nvidia DreamDojo would allow robots to train on 44,000 hours of egocentric human video, which shows humans handling tools, assembling objects, and doing laundry.

NVIDIA terms this open-source world model as the “largest dataset to date for world model training.” The dataset is called DreamDojo-HV (Human Video) and comprises exactly 44,711 hours of footage, which includes 6,015 unique tasks and more than a million trajectories. This works in two independent phases and is billed by Nvidia to be 15 times larger and about 96 times more skill-packed. It is also believed to include 2000 times more scenes than ever seen in the previous largest datasets for world model training.

Two-phase robotic course for being human

Of course, collecting robot-specific data is the biggest bottleneck in the industry. By simplifying that with abundant human video, Nvidia is trying to make learning convenient and cheaper for robotic companies betting on humanoids. For me, this possibility of learning through seeing before touching physical objects is compelling. And for its execution is divided into two phases: Pre-Training and Post-Training.

Firstly, it pre-trains on large-scale human video using what Nvidia says is “latent actions.” Since human videos do not provide joint torque labels or motor commands, Nvidia has trained a “700-million-parameter spatiotemporal Transformer” to extract “proxy actions” from visual changes between frames, allowing the model to “treat any human video as if it came with motor commands attached.” Secondly, it post-trains on a specific robot body with “continuous robot actions.” The idea is to separate physical understanding from hardware control, so that the robot learns the rules of the physical world first and then adapts them to need and limb requirements.

Real-time dreaming

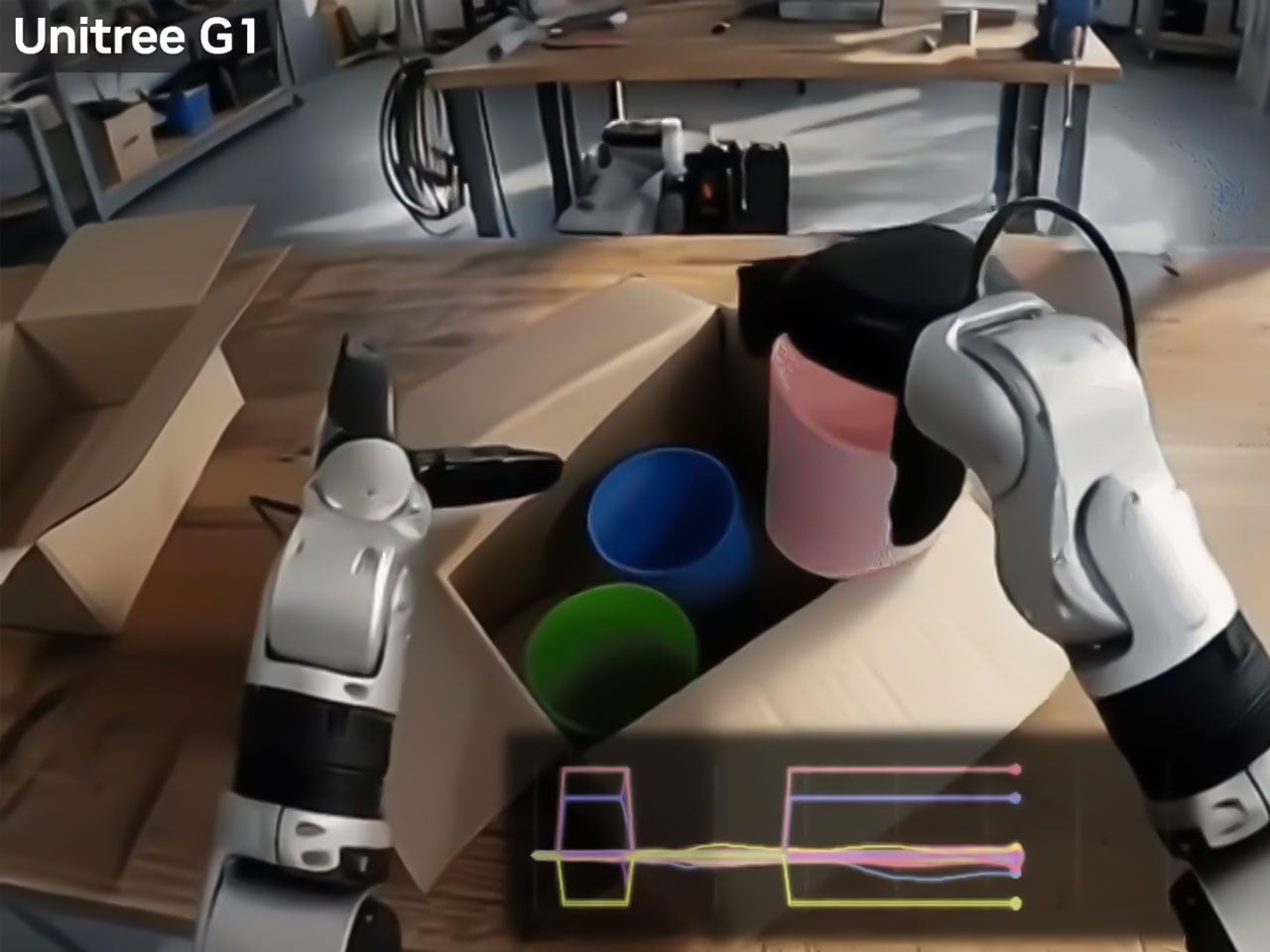

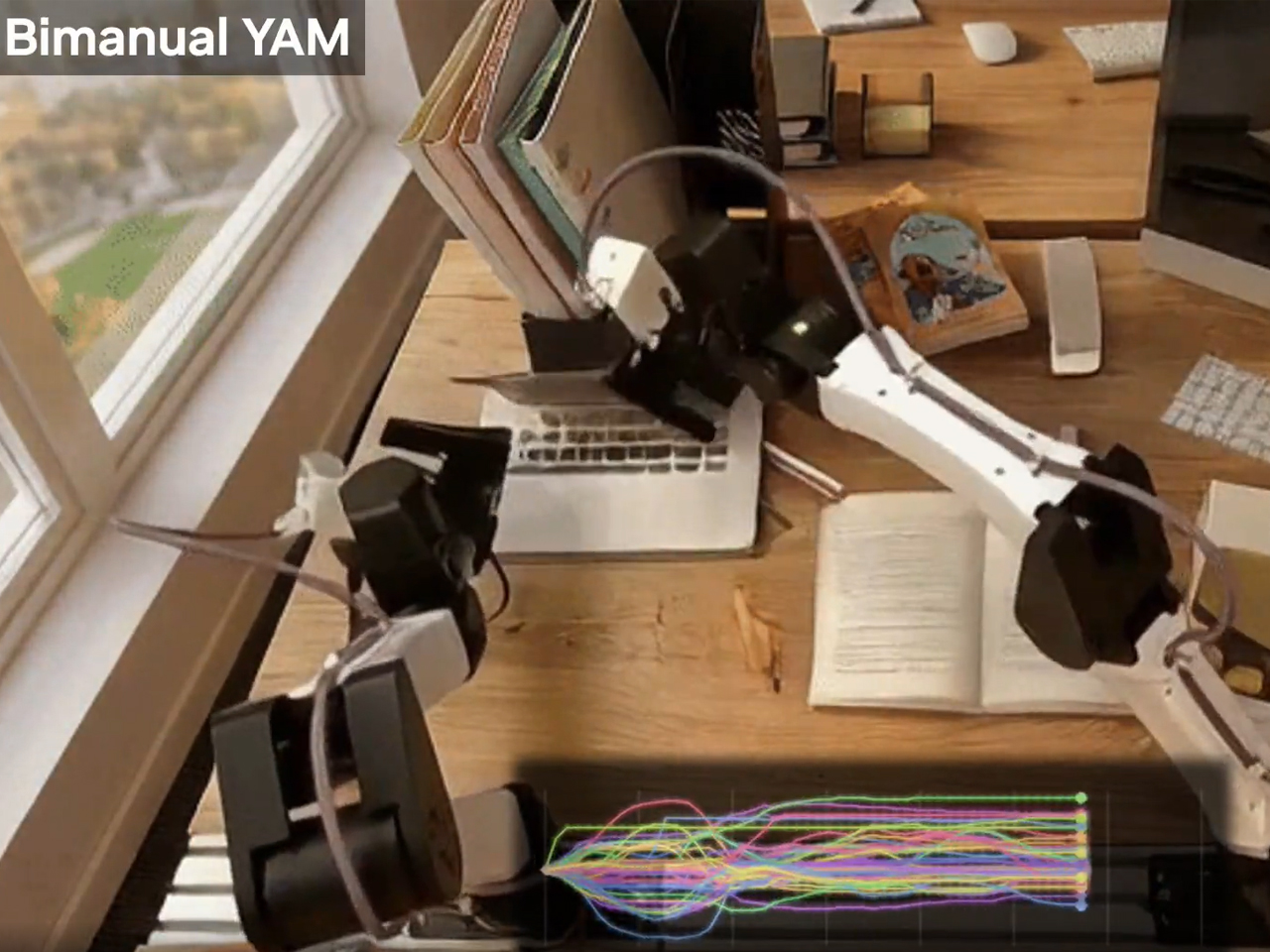

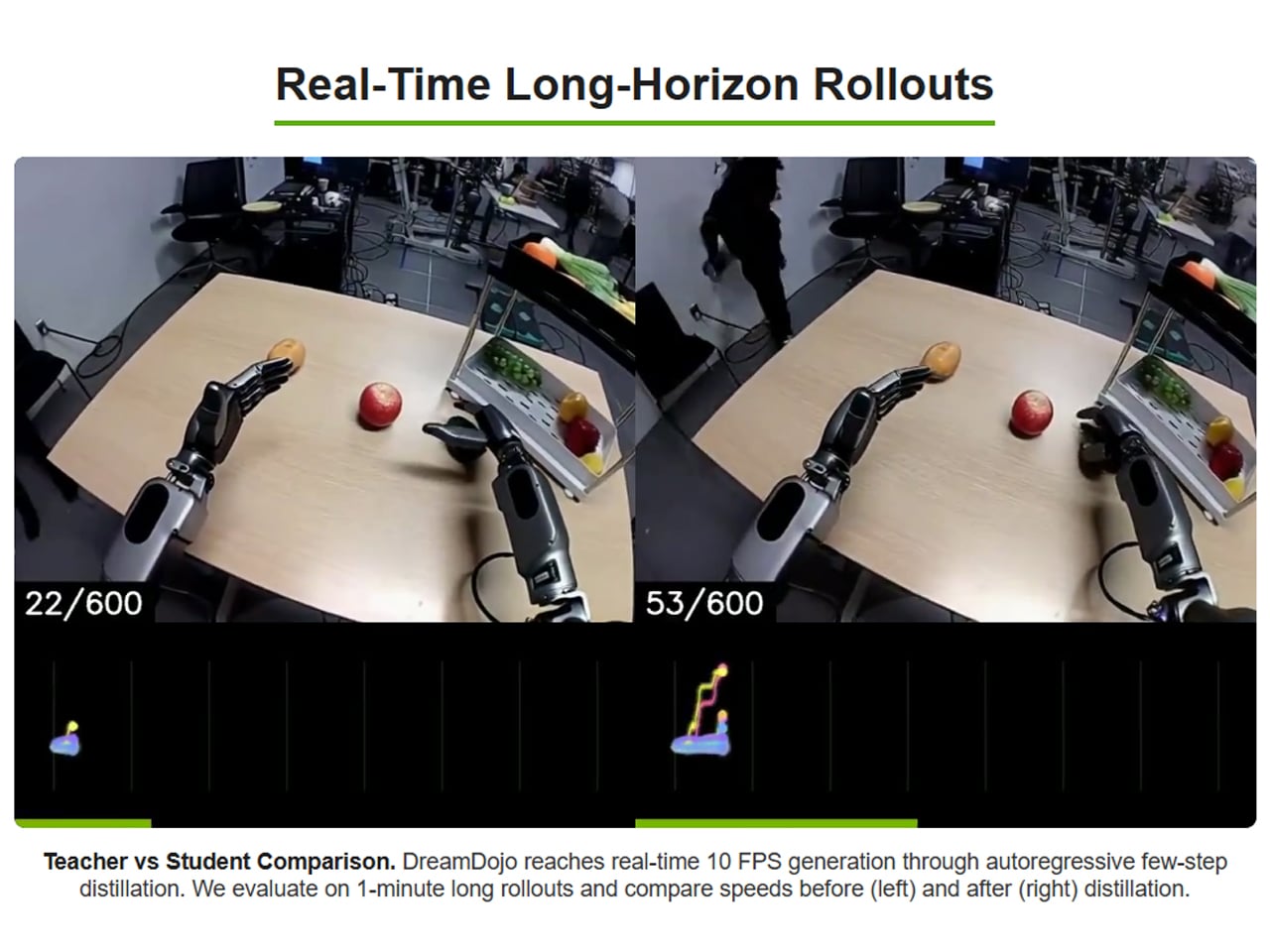

With its world model designed to teach robots to watch humans first, Nvidia is suggesting to us that the best and fastest way to scale humanoids isn’t more robot data. It is probably their exposure to more human experience. Considering this, it’s imperative to note that this is not the first world model. Many have been devised before, but they have been considerably slower at achieving the outcome. NVIDIA has been able to clock up the pace by distilling DreamDojo to run at 10.81 frames per second in real time for over a minute. DreamDojo HV has been demonstrated across humanoid platforms like GR-1, G1, AgiBot, and YAM robots, the company says, and has shown what it calls “realistic action-conditioned rollouts” across diverse environments and object interactions.

From what I see, if DreamDojo can work as the press information reveals, it could make life easier for startups and robotic teams with limited resources to collect a large robot-specific dataset and use it to teach their robots. But before more use case scenarios trained on the Nvidia world model show up, I am skeptical how they will perform in every changing real-world condition, which are not absolutely the same at any two moments.