Too Little, Too Early… The Wehead has a long way to go before it can be taken seriously, on both hardware and software fronts.

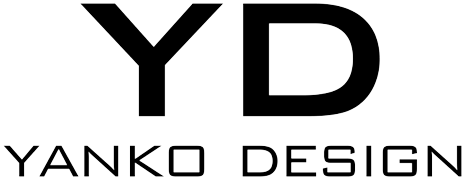

As we walked the floor of the Showstoppers event at CES, my eyes landed on something familiar. I made eye contact (to the best of my ability to make eye contact with a set of virtual eyes) with the $5000 Wehead device, which I had reported on mere weeks ago. It sat on a lone table in the corner of the massive ballroom hosting the event, with a few people working up the courage to talk to it. Obviously, I wanted to get a real sense of what it was like to chat with an AI, but also to see whether this $5000 device was worth the hype. Long story short, the Wehead was a bit of a mess from top to bottom. The hardware lacked the kind of finesse you’d expect from a premium product, and the software failed miserably at processing requests amid the buzz of the crowd around it.

Designer: Wehead

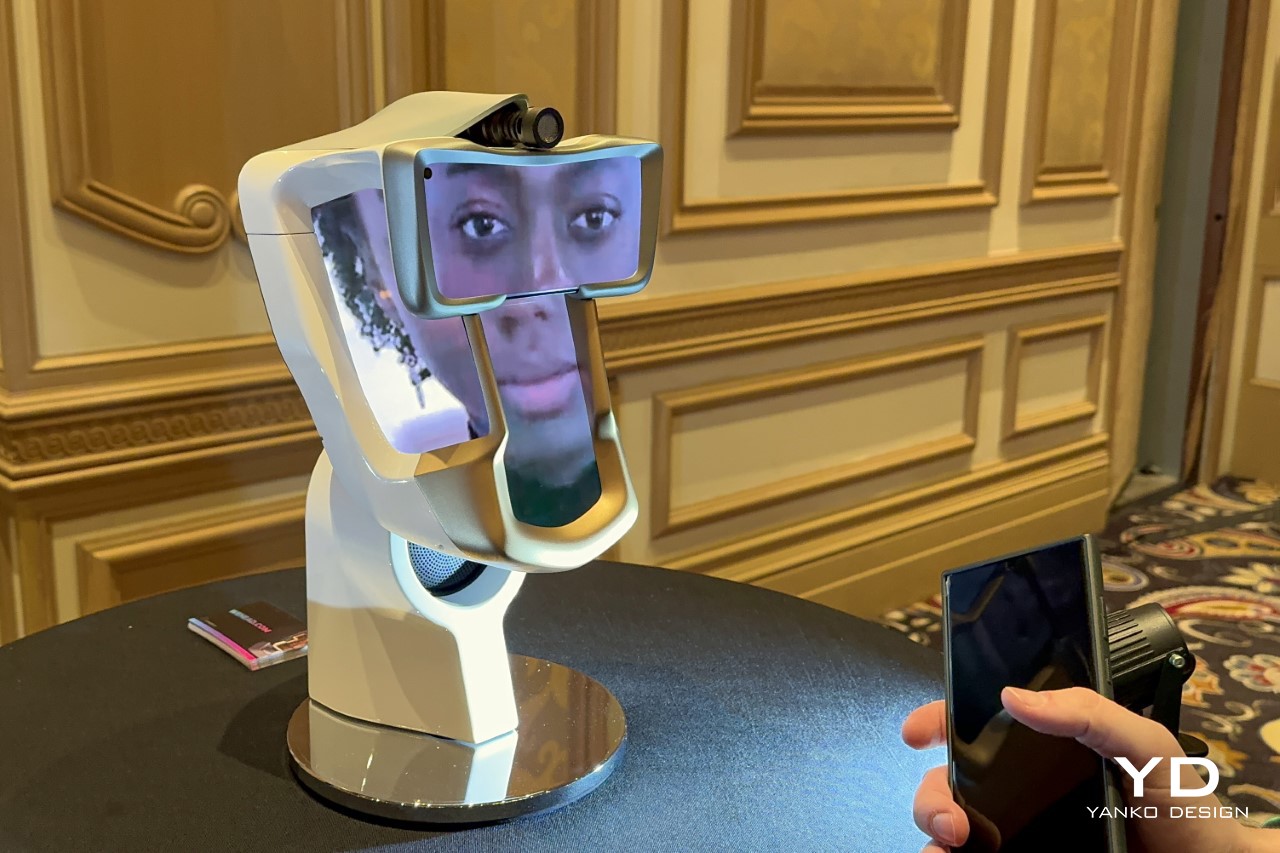

The Wehead was first envisioned as a one-of-a-kind teleconferencing device: instead of staring at a screen during a video call, you would look at a head that moved and responded to the actions of the person on the other end. Somewhere down the line, the company pivoted, turning it into a ChatGPT-esque assistant that would use AI to answer queries and augment daily life. The difference between the Wehead and something like ChatGPT, Siri, or Google Assistant? The Wehead actually had a face, which, at least in theory, would add a more immersive, believable layer to the entire experience.

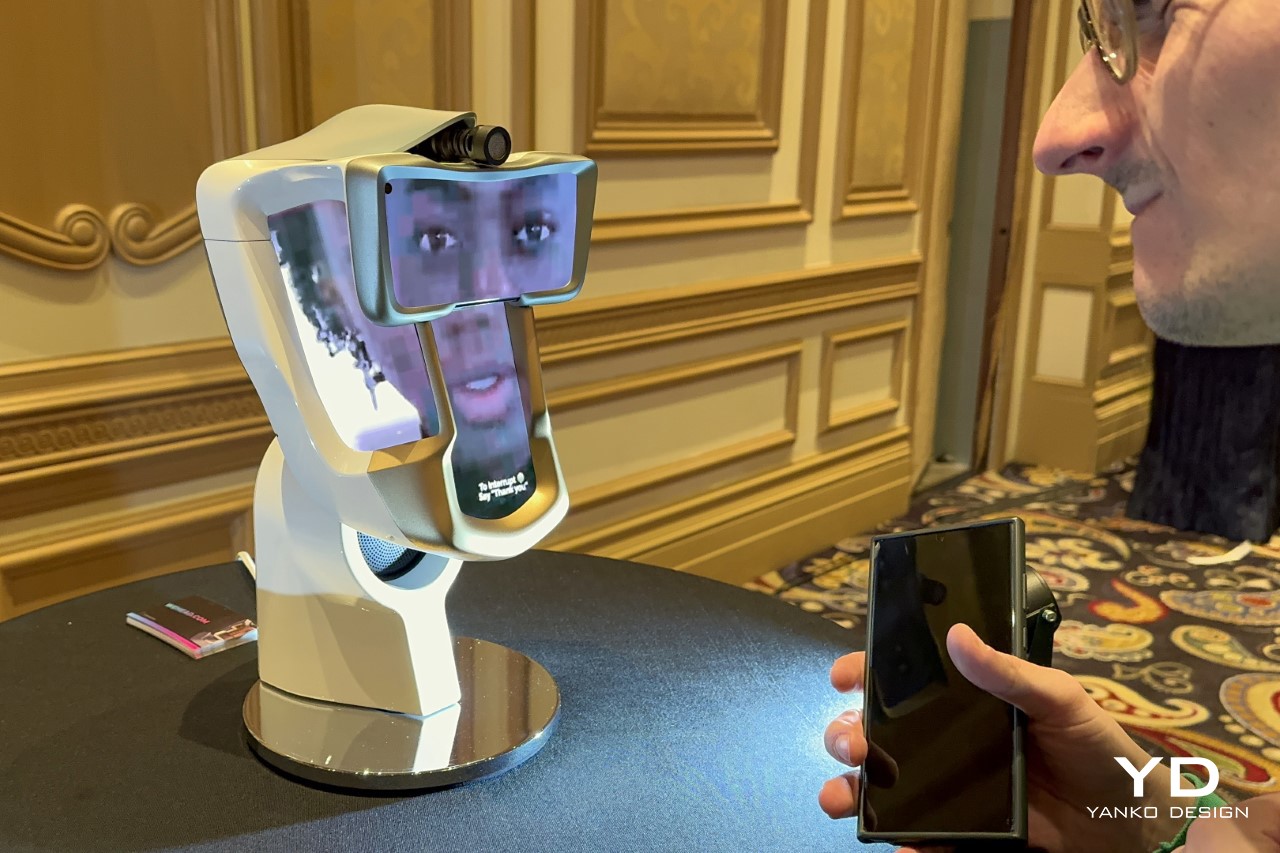

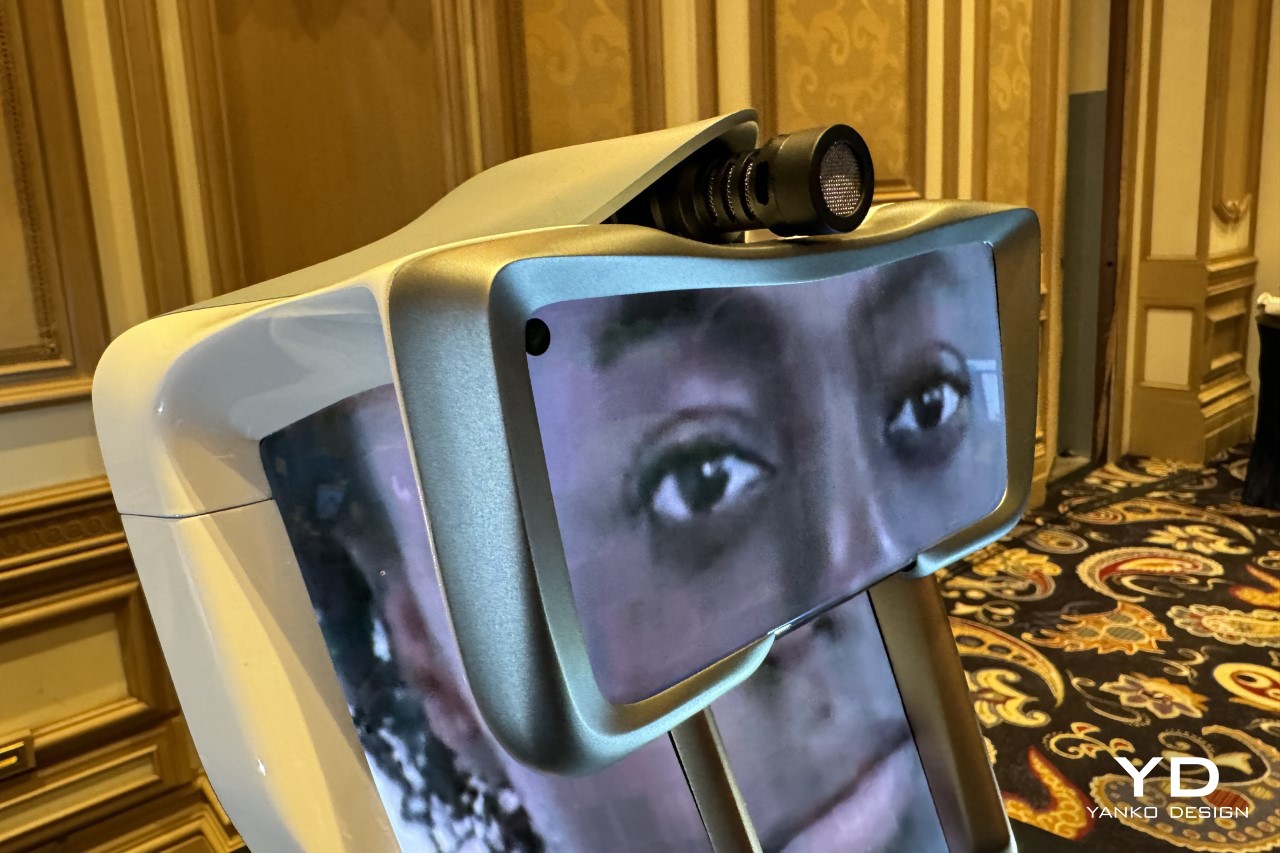

The problem, however, lay in two broad areas. First, the Wehead was a solution in search of a problem. AI lacking a face may be a shortcoming, but it isn’t one that demands a $5000 multi-screen bionic robot. Second, even if it did, the Wehead itself was a rather shoddily assembled device, using four mobile phones, a shotgun mic, and a speaker to give ChatGPT an anthropomorphized touch.

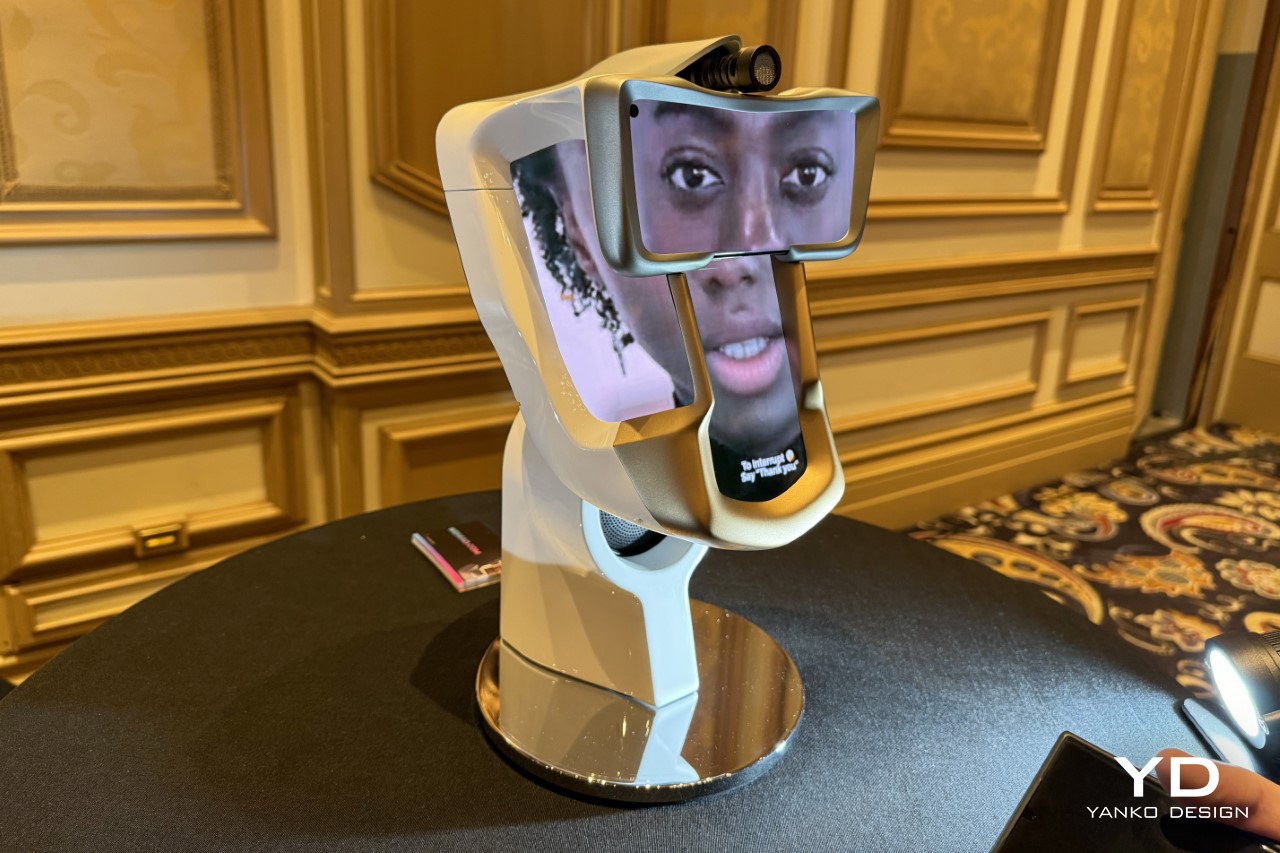

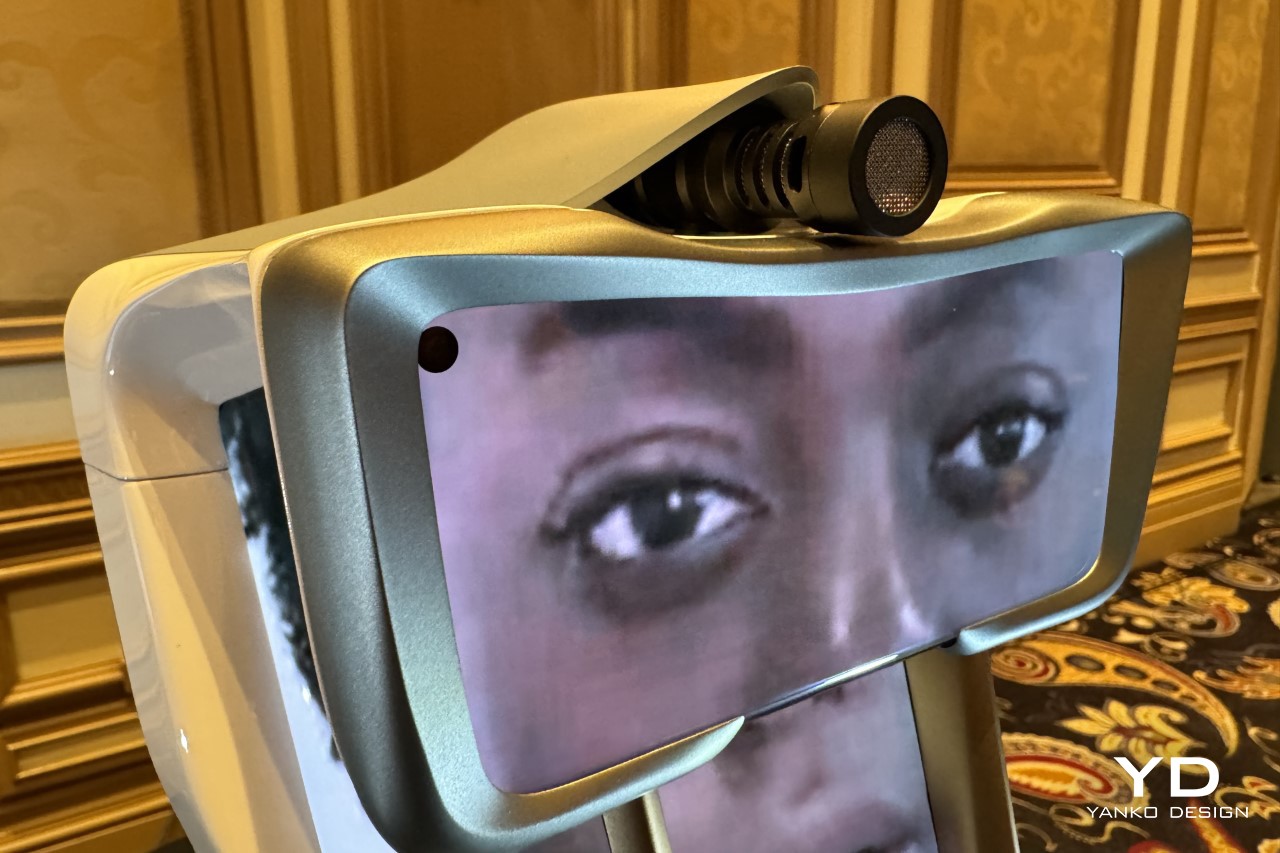

For starters, a single look at the Wehead revealed that its four screens were actually smartphones assembled into one large MacGyvered contraption. The screen showing the Wehead’s eyes even had a visible front-facing camera cutout. Above it sat an off-brand shotgun microphone that captured vocal input, and below, a small speaker where you’d expect the Wehead’s throat to be. The four screens displayed different parts of the Wehead’s face, which emoted as the Wehead talked, listened, and interacted.

And if the hardware looked like it had been put together by a bunch of engineering students, the experience fared no better. Its face was perpetually pixelated, which further undercut the Wehead’s already limited realism. There was a severe mismatch between the audio and the face’s movements, compounding the problem… and finally, the Wehead just couldn’t seem to grasp anything anyone said. Sure, the event was crowded, resulting in a lot of background noise, but the Wehead still fumbled the basic questions it did manage to catch. When the Wehead got stuck in one of its “I’m sorry, I don’t understand” feedback loops, someone from the company came by to make it stop responding, and it took them three tries. Much of this can be blamed on the event’s background chatter, which practically set the AI head up for failure, but it also exposed the Wehead’s clear inability to isolate a speaker’s voice before processing it.

Here’s the thing though… I do think the Wehead holds great potential. It just needs a LOT of work before it can justify that price tag. For starters, maybe ditch the smartphone displays for something more unique like a curved OLED… and hide the microphone and speaker, so it isn’t that obvious that this was put together using hardware bought at Best Buy. A talking head running ChatGPT sounds impressive, but the illusion sure falls apart when it looks like a college project, and when the Wehead itself can barely pick up anything you say to it.